Oxford Spires Dataset

Yifu Tao, Miguel Ángel Muñoz-Bañón, Lintong Zhang, Jiahao Wang, Lanke Frank Tarimo Fu, Maurice Fallon

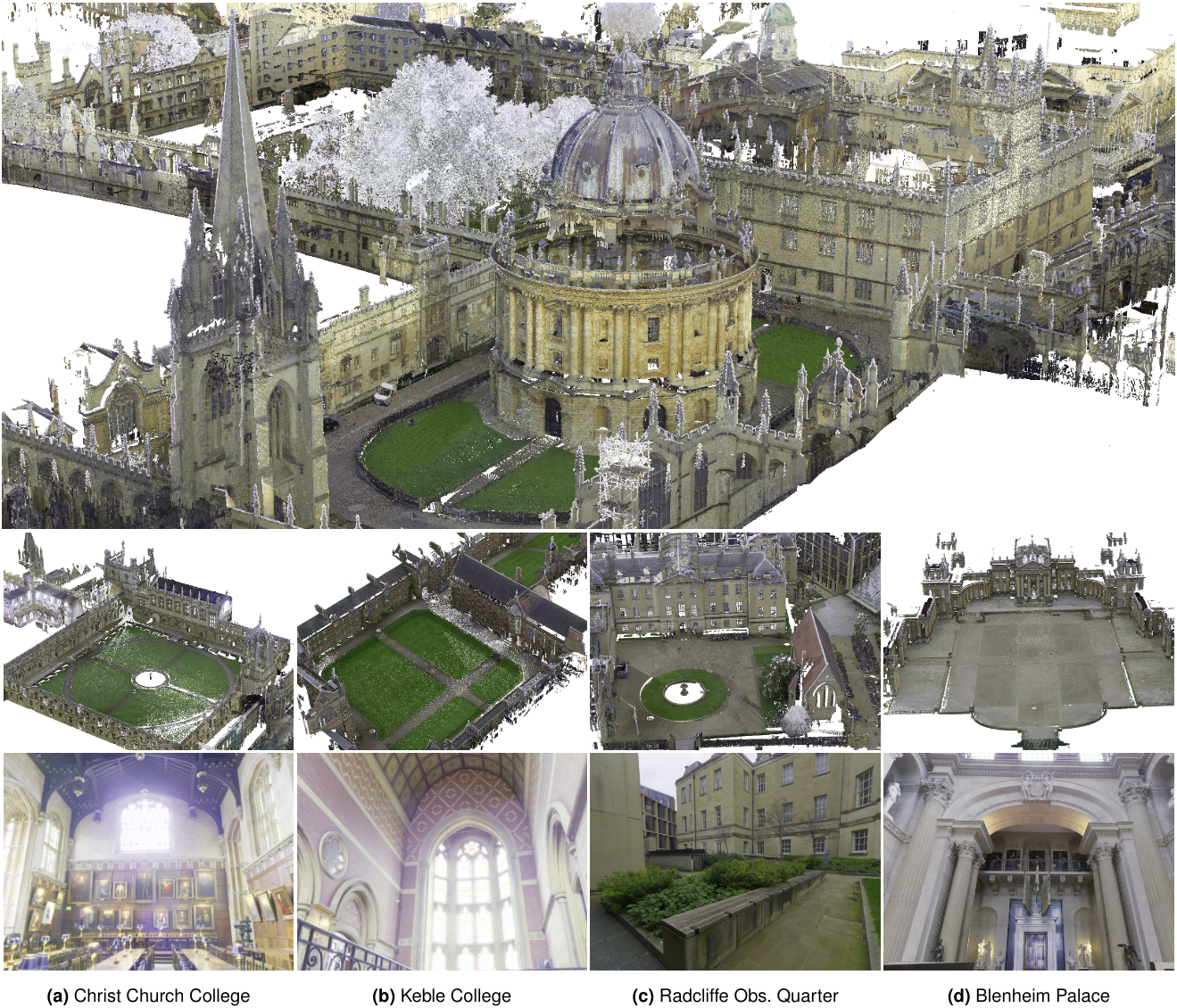

We present the Oxford Spires Dataset, captured in and around well-known landmarks in Oxford using a custom-built multi-sensor perception unit, together with a millimetre-accurate map from a terrestrial LiDAR scanner (TLS). The perception unit includes three global shutter colour cameras, an automotive 3D LiDAR scanner, and an inertial sensor, all precisely calibrated.

News

(12 May 2025) Paper accepted for publication in the International Journal of Robotics Research (IJRR).

(11 May 2025) Dataset available at Hugging Face.

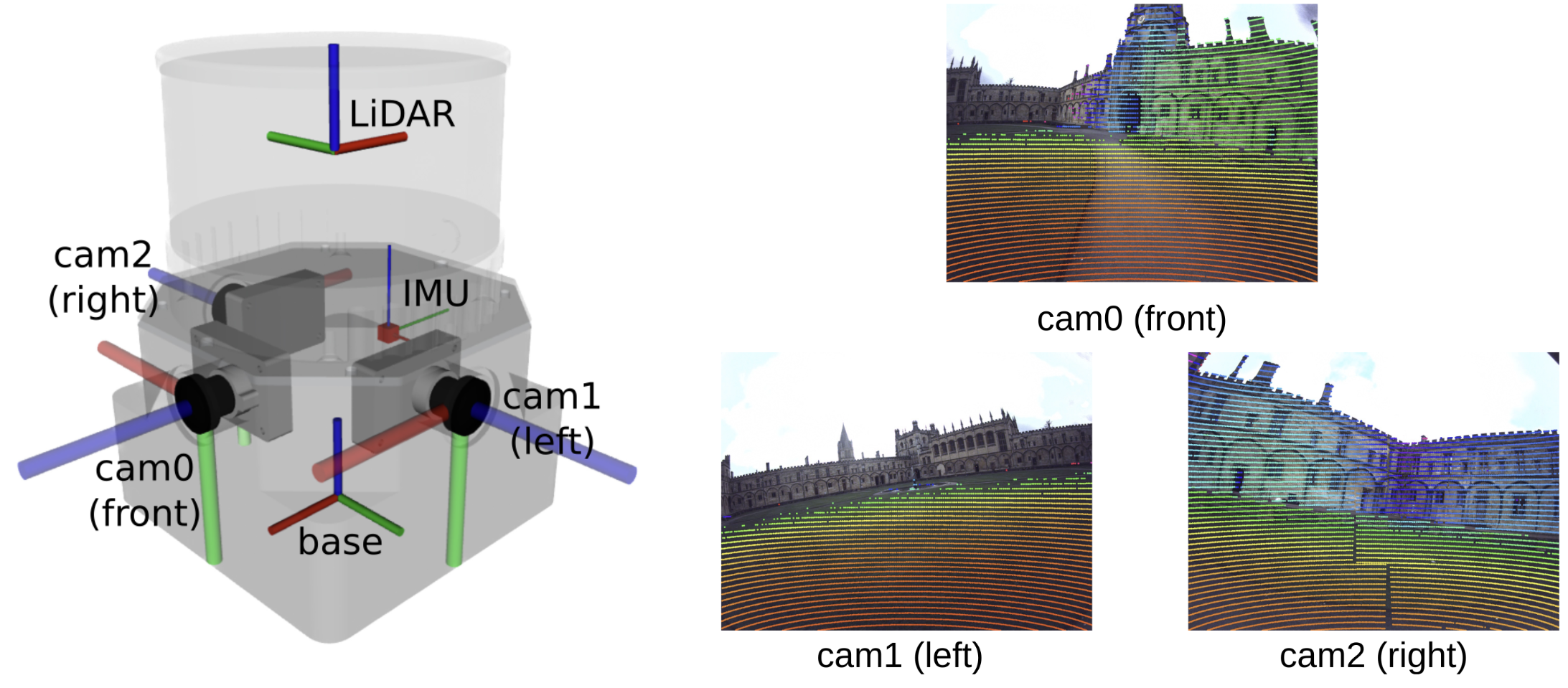

Handheld Perception Unit

Our perception unit, Frontier, has three cameras, an IMU, and a LiDAR. It is shown in the figure above. The three colour fisheye cameras face forward, left, and right, and the LiDAR is mounted on top of the cameras. The table below lists the specifications of each sensor.

| Sensor | Model | Rate | Notes |
|---|---|---|---|
| LiDAR | Hesai QT64 | 10 Hz | 64 channels, 60 m range, 104° FoV, ±3 cm accuracy |
| Cameras (×3) | Alphasense Core Development Kit (Sevensense Robotics AG) | 20 Hz | 1440 × 1080, 126° × 92.4° FoV, global shutter, colour fisheye |
| IMU | Alphasense Core Development Kit (Sevensense Robotics AG) | 400 Hz | Cell-phone grade MEMS, hardware-synchronised with cameras via FPGA |

➡️ Read more: See the Sensors wiki page for information on ROS topics, synchronisation and calibration.
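Because the sensors run at different rates, measurements usually have to be associated by timestamp before they can be used together. The sketch below is a minimal illustration using the nominal rates from the table; the function name `nearest_index` and the idealised timestamp streams are assumptions made for this example and are not part of the dataset tooling. Real recordings will contain jitter and clock offsets, so refer to the Sensors wiki page for the actual synchronisation details.

```python
from bisect import bisect_left

# Nominal rates from the sensor table (Hz).
LIDAR_HZ, CAMERA_HZ, IMU_HZ = 10, 20, 400


def nearest_index(sorted_stamps, t):
    """Return the index of the timestamp in sorted_stamps closest to t."""
    i = bisect_left(sorted_stamps, t)
    if i == 0:
        return 0
    if i == len(sorted_stamps):
        return len(sorted_stamps) - 1
    before, after = sorted_stamps[i - 1], sorted_stamps[i]
    # Prefer the later stamp only if it is strictly closer.
    return i if (after - t) < (t - before) else i - 1


# Idealised one-second timestamp streams (hypothetical, no jitter or offset).
lidar_stamps = [k / LIDAR_HZ for k in range(LIDAR_HZ)]
camera_stamps = [k / CAMERA_HZ for k in range(CAMERA_HZ)]

# Pair each LiDAR scan with the closest camera frame.
pairs = [(t, camera_stamps[nearest_index(camera_stamps, t)]) for t in lidar_stamps]

# At the nominal rates there are 20 IMU samples per camera frame.
imu_per_camera_frame = IMU_HZ // CAMERA_HZ
```

With these nominal rates, every LiDAR scan has a camera frame at the same instant, and every second camera frame has no matching scan.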

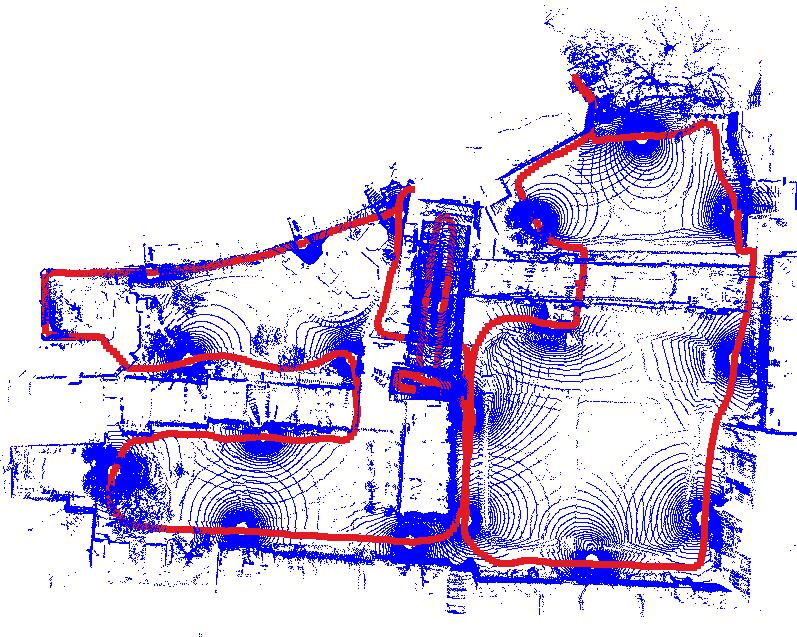

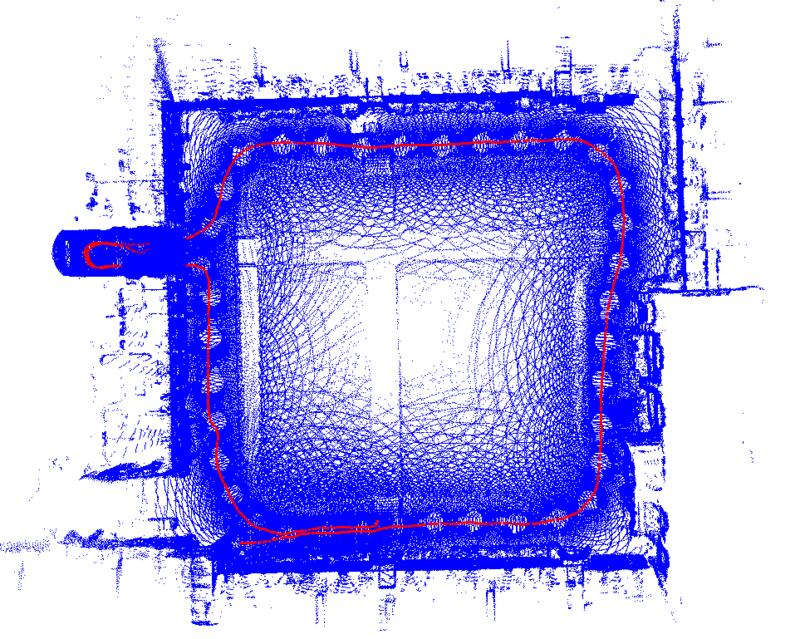

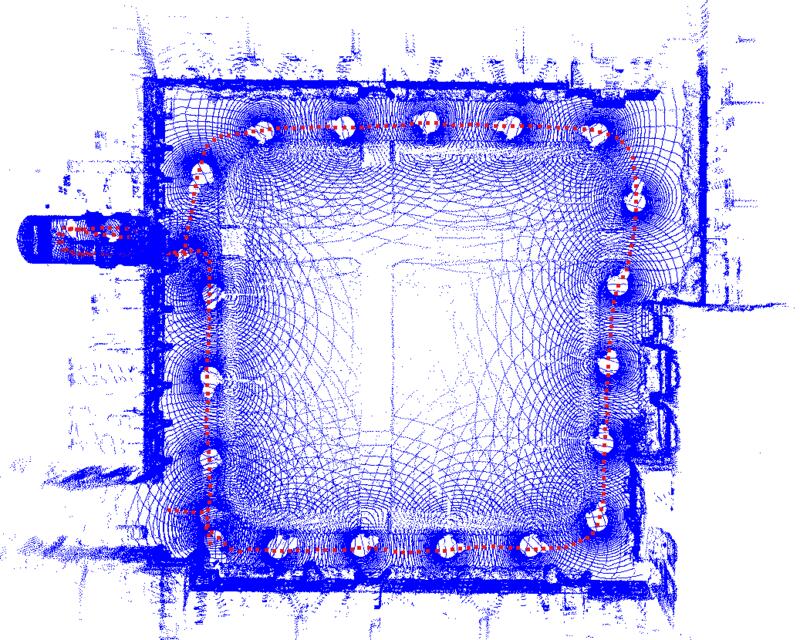

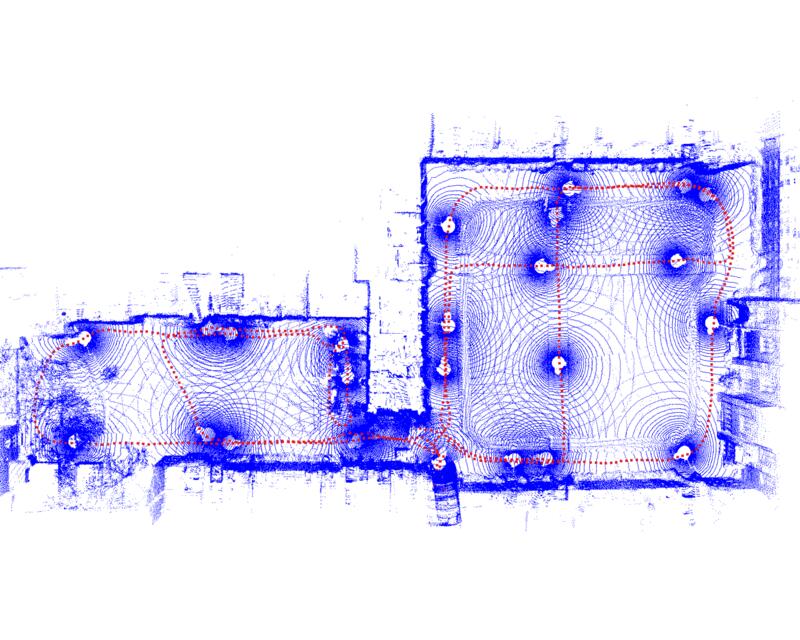

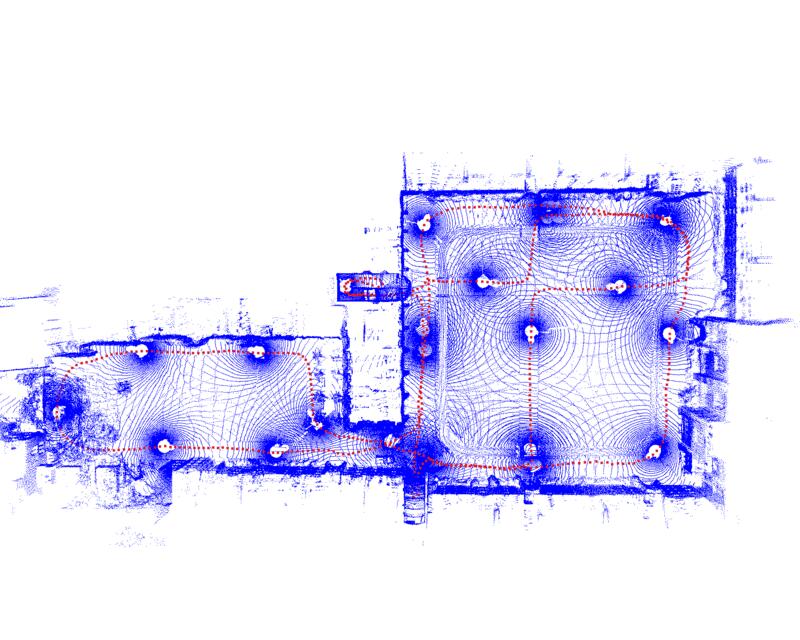

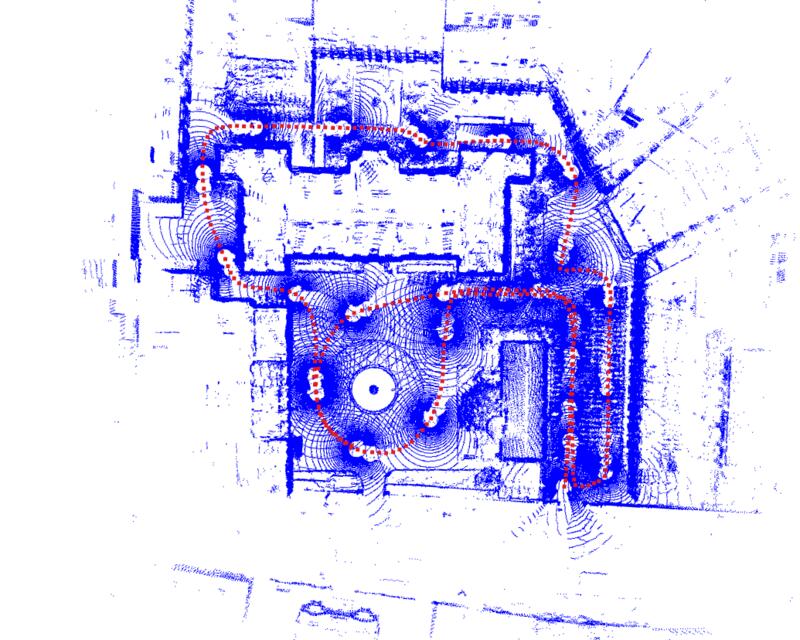

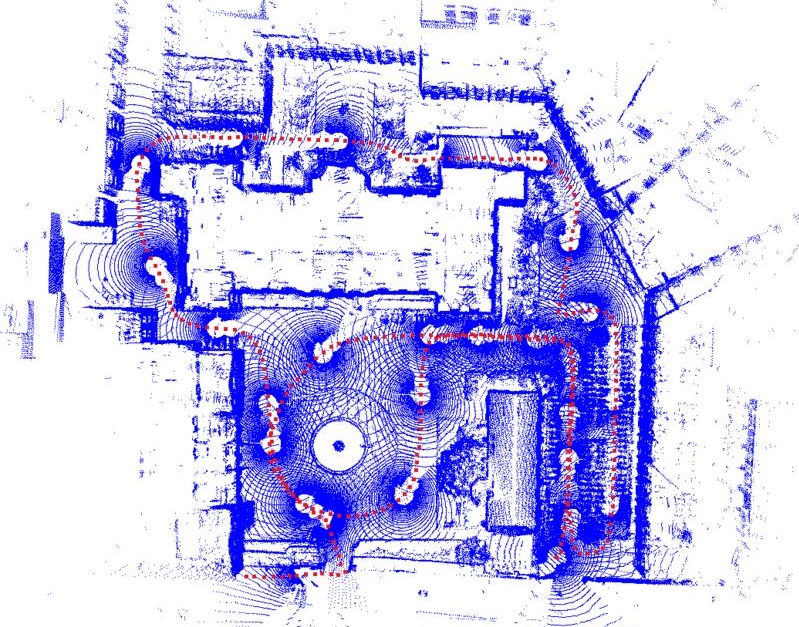

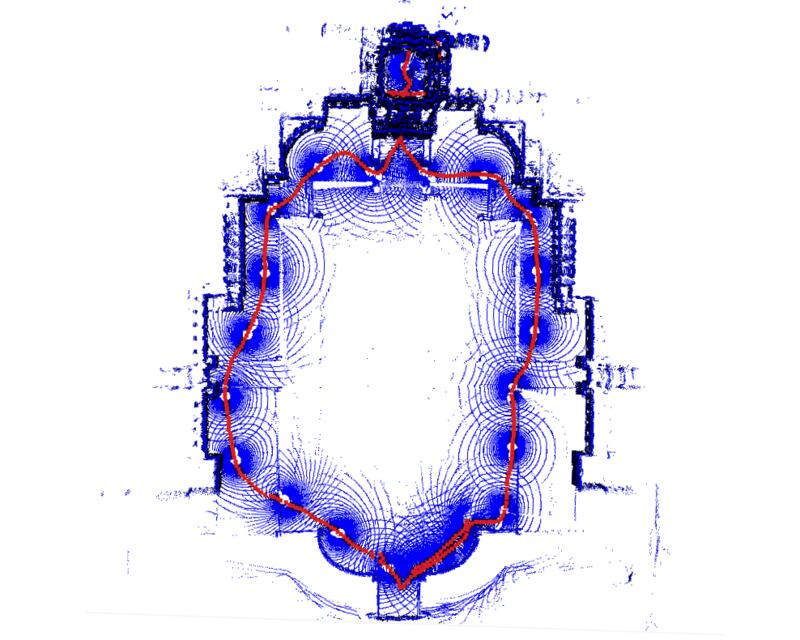

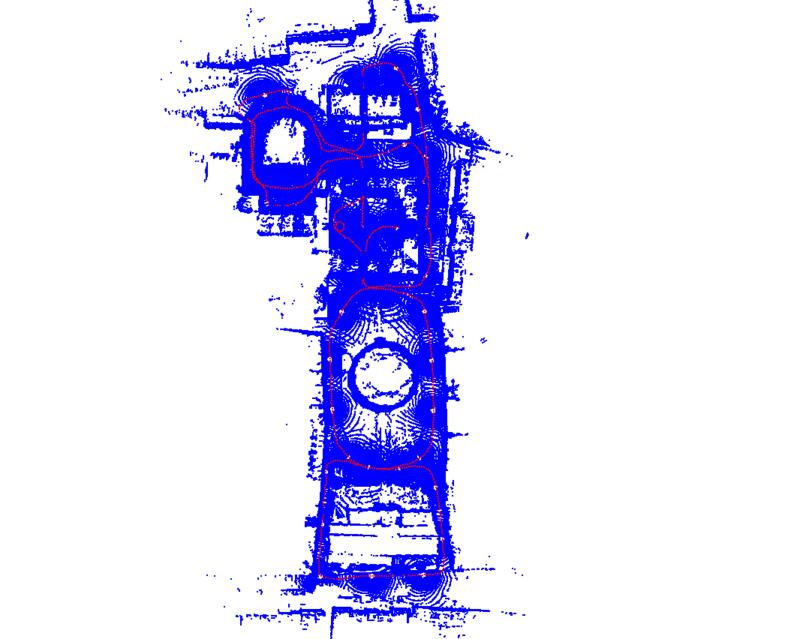

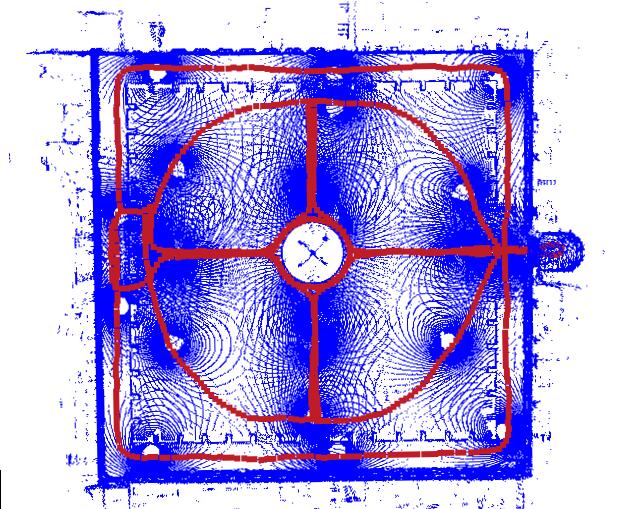

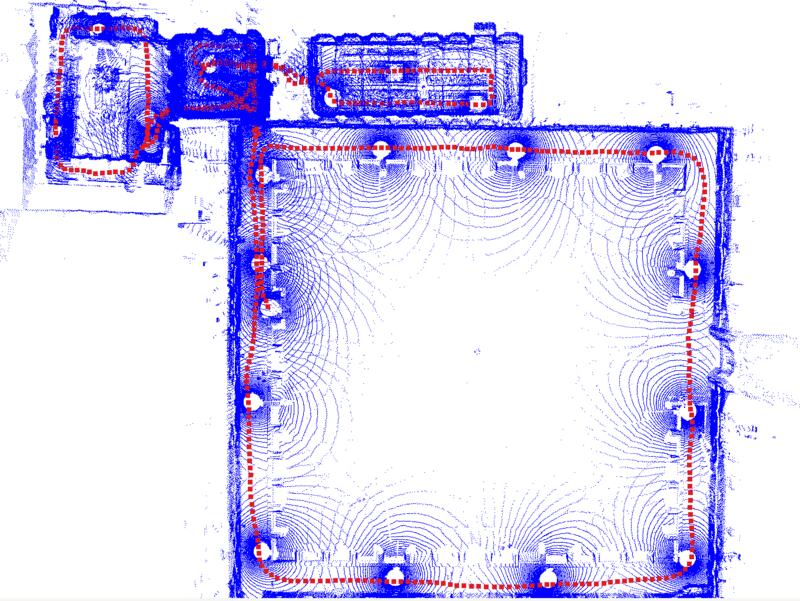

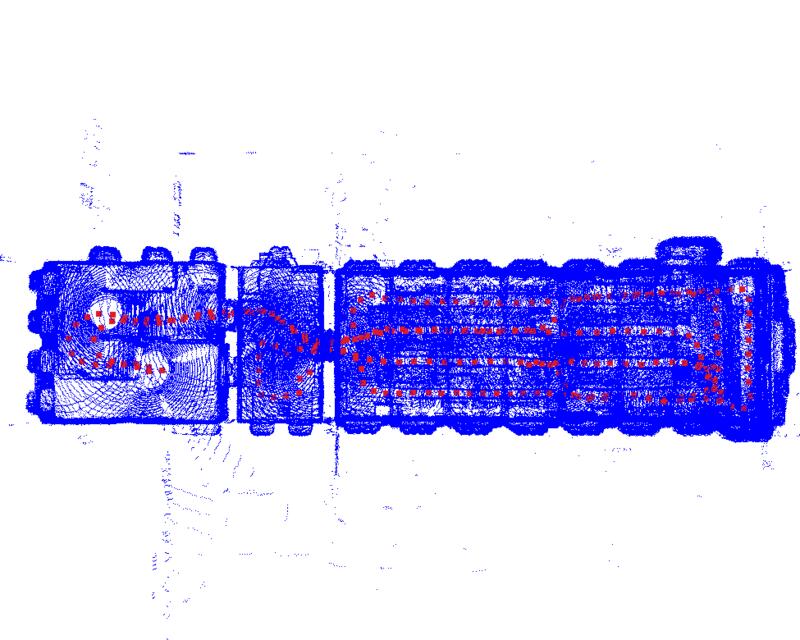

Sequences

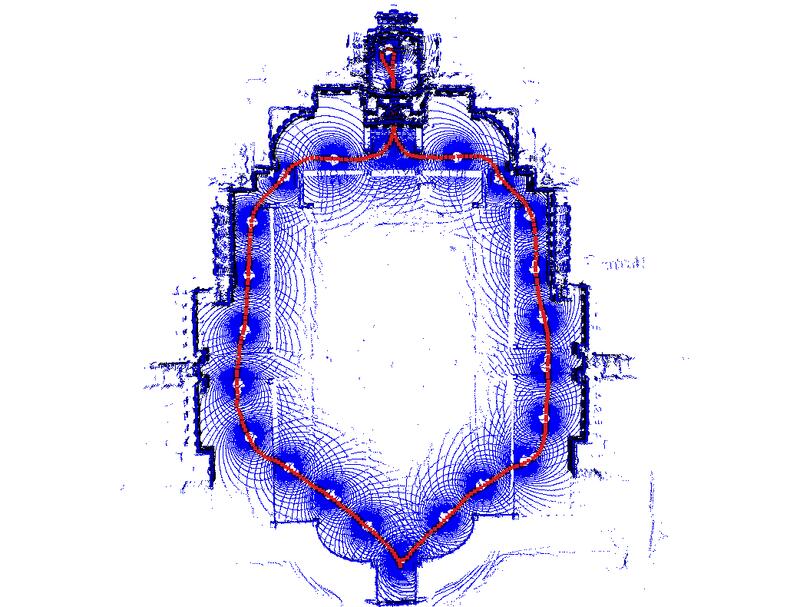

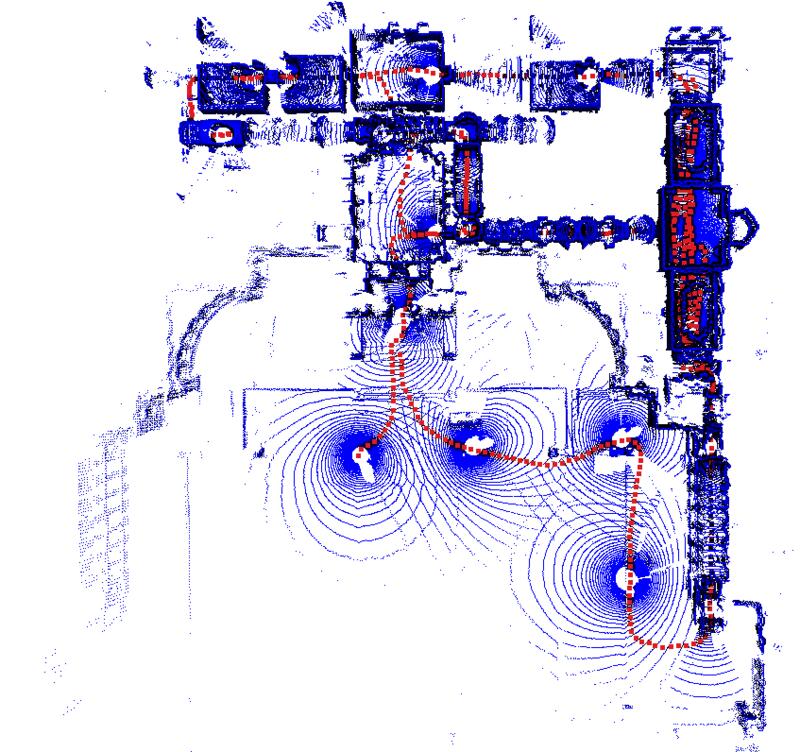

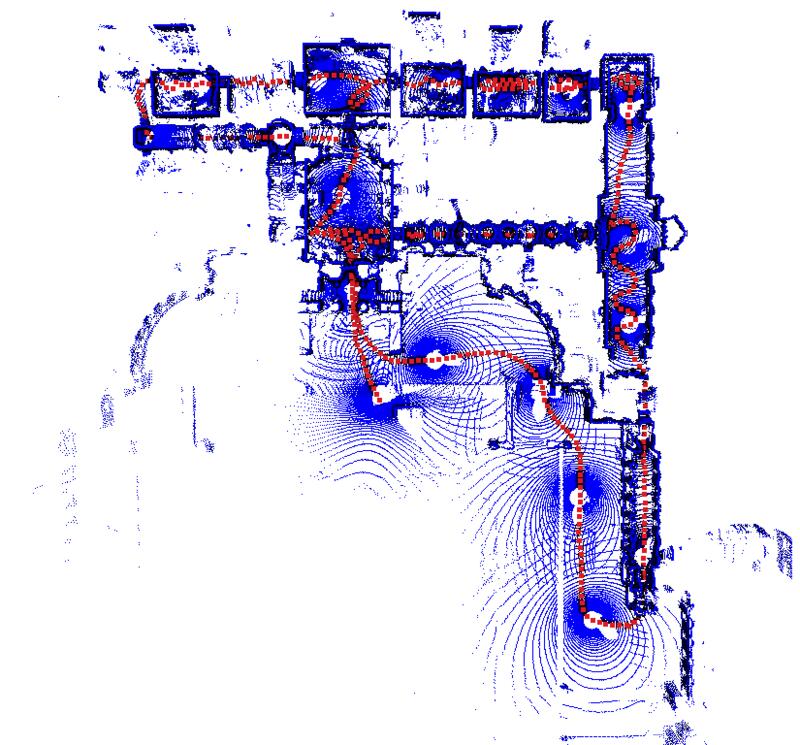

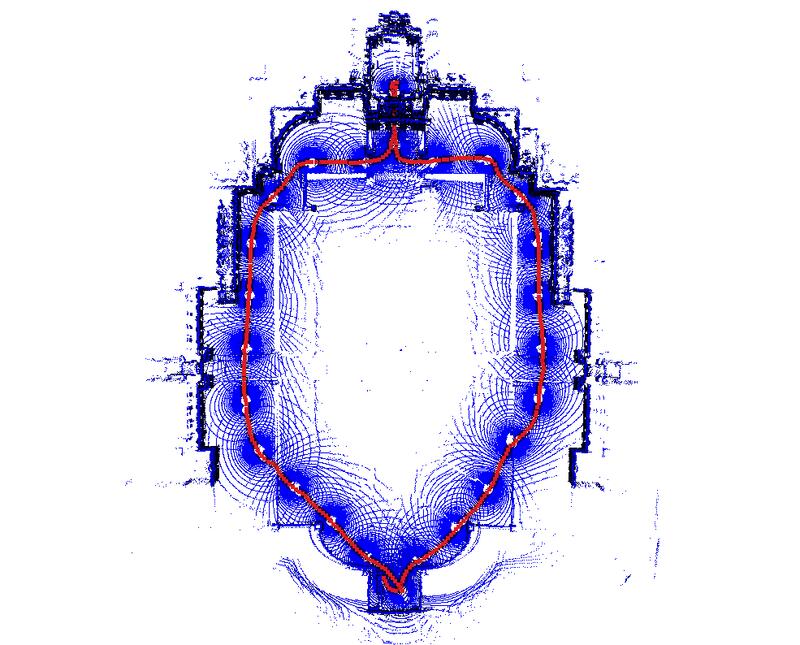

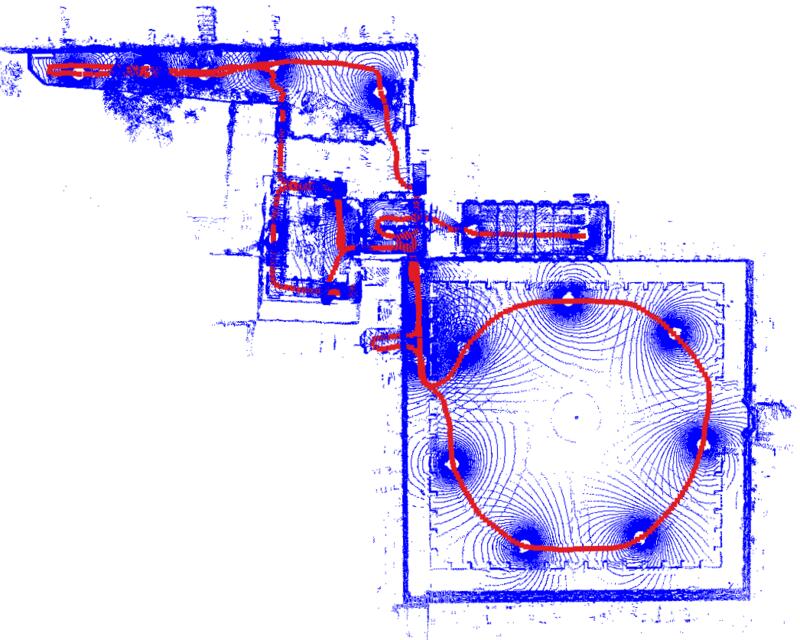

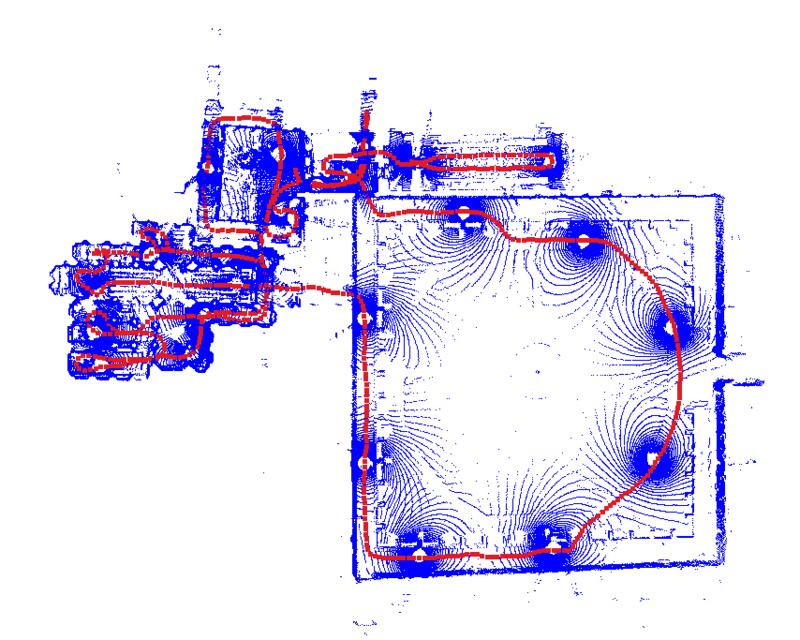

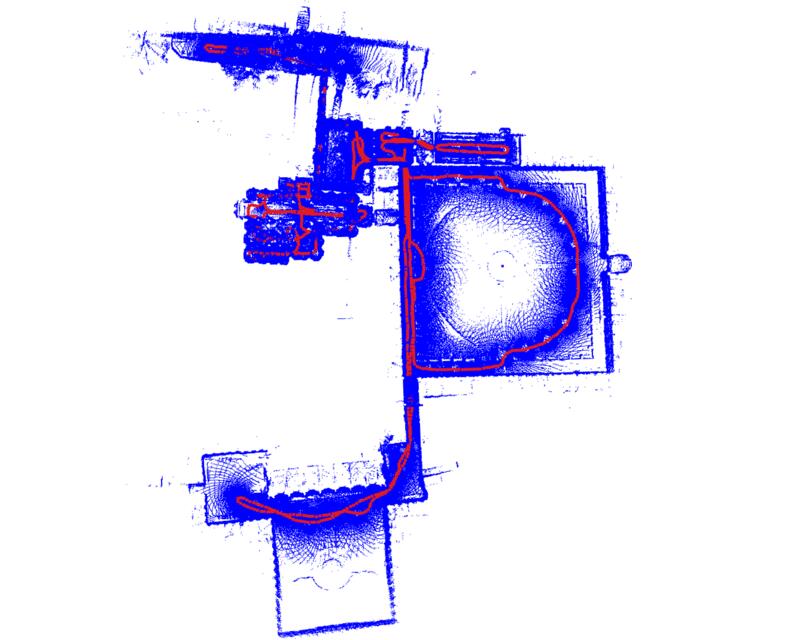

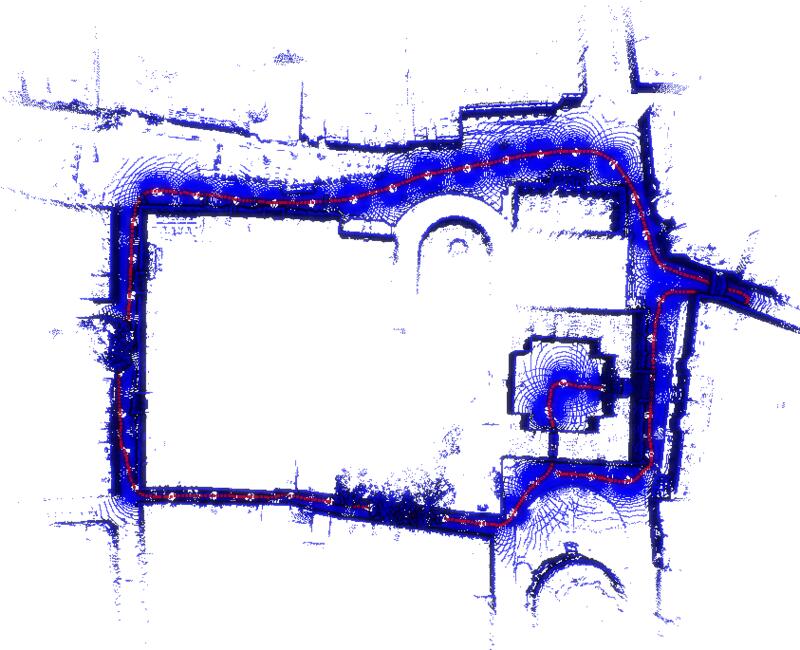

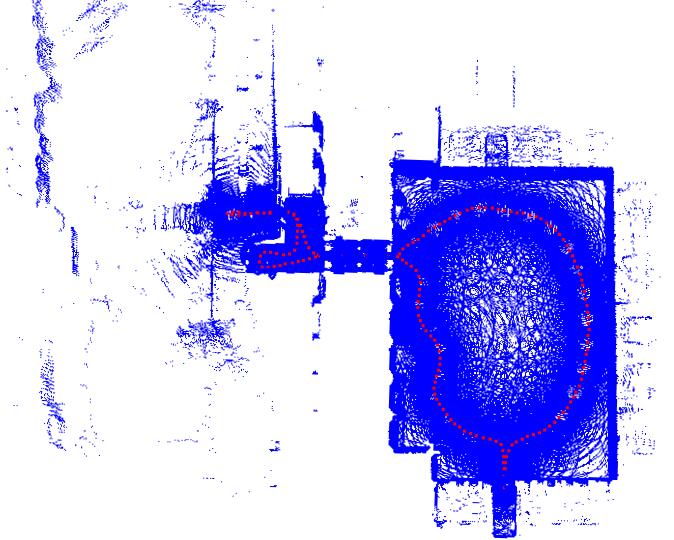

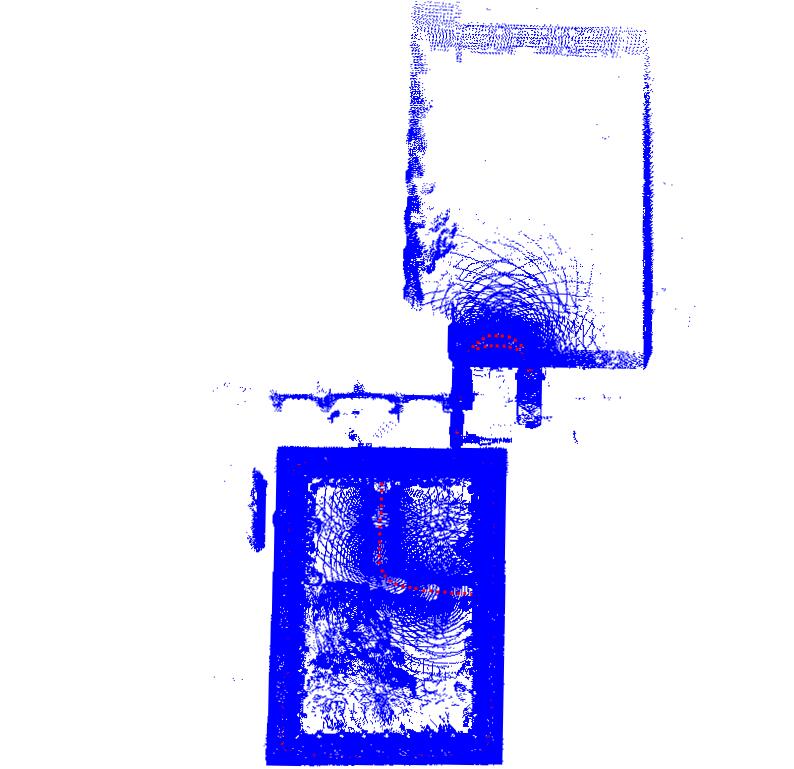

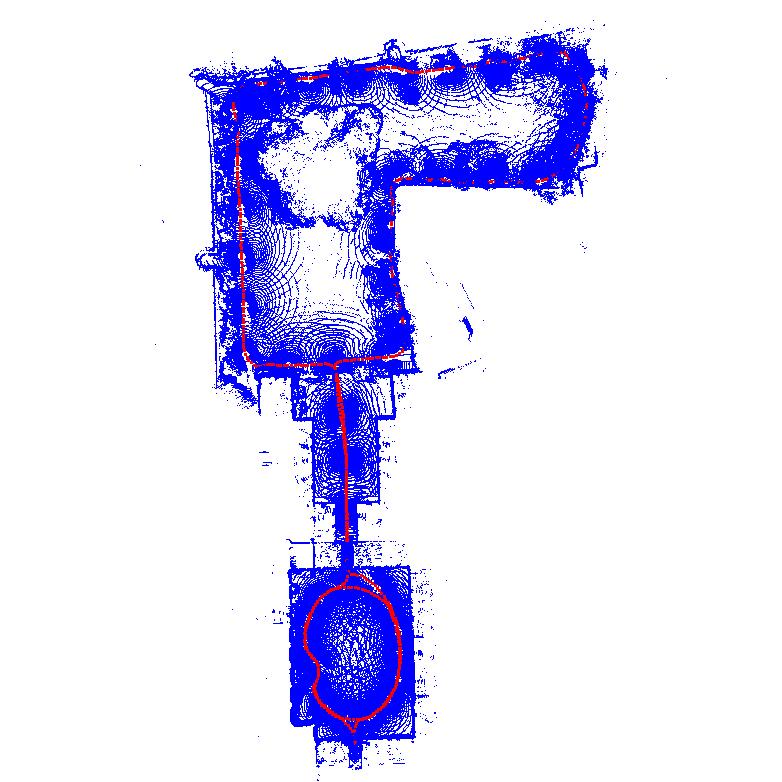

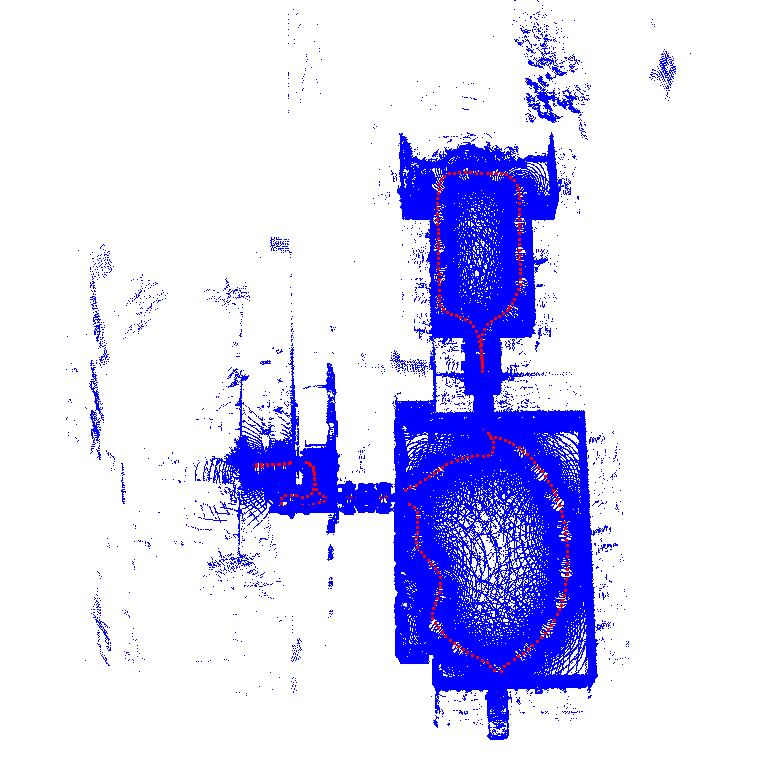

The Frontier device was carried in a backpack (figure below) through multiple sites to record the 24 sequences below.

Among the 24 sequences, the core sequences (highlighted in green, also listed here) have ground-truth LiDAR trajectories and are used for the localisation benchmark. Each card shows the trajectory image and is tagged with badges indicating the types of environment it covers:

Ground Truth

Using a millimetre-accurate TLS (Leica RTC360), we provide the following reference ground truth:

- Ground Truth 3D Map: The TLS point cloud map is provided as the ground truth for evaluating 3D reconstruction.

- Ground Truth Trajectory: We register the Hesai LiDAR point clouds to the TLS map to provide centimetre-accurate ground truth poses for evaluating localisation. Note that each pose gives the pose of the base frame in the world frame.

➡️ Read more: See the Ground Truth wiki page.
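Since each ground truth pose expresses the base frame in the world frame, a point measured in the base frame can be mapped into the world frame as p_world = R p_base + t. The sketch below illustrates this, along with a simple translation RMSE between two position lists. It assumes a pose is given as a translation `(x, y, z)` and a unit quaternion `(qx, qy, qz, qw)`; that layout, and the function names, are assumptions for illustration, so check the Ground Truth wiki page for the actual file format. The RMSE is a generic error measure and is not necessarily the metric used by the localisation benchmark.

```python
import math


def quat_to_rot(qx, qy, qz, qw):
    """Rotation matrix (row-major, 3x3) for a unit quaternion (qx, qy, qz, qw)."""
    return [
        [1 - 2 * (qy * qy + qz * qz), 2 * (qx * qy - qz * qw), 2 * (qx * qz + qy * qw)],
        [2 * (qx * qy + qz * qw), 1 - 2 * (qx * qx + qz * qz), 2 * (qy * qz - qx * qw)],
        [2 * (qx * qz - qy * qw), 2 * (qy * qz + qx * qw), 1 - 2 * (qx * qx + qy * qy)],
    ]


def base_to_world(point, translation, quaternion):
    """Map a point from the base frame to the world frame: R * p + t."""
    rot = quat_to_rot(*quaternion)
    return tuple(
        sum(rot[r][c] * point[c] for c in range(3)) + translation[r]
        for r in range(3)
    )


def translation_rmse(estimated, ground_truth):
    """Root-mean-square position error between two equal-length position lists."""
    if len(estimated) != len(ground_truth) or not estimated:
        raise ValueError("position lists must be non-empty and of equal length")
    squared = [
        sum((e - g) ** 2 for e, g in zip(est, gt))
        for est, gt in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical pose: base rotated 90 degrees about z, offset by (10, 20, 30).
s = math.sqrt(0.5)
p_world = base_to_world((1.0, 0.0, 0.0), (10.0, 20.0, 30.0), (0.0, 0.0, s, s))
```

The RMSE assumes the two trajectories are already time-associated and expressed in the same frame; in practice an alignment step is usually needed first.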

Dataset Structure

oxford_spires_dataset/
├── sequences/<sequence-name>/
│   ├── raw/                        # ROS bags, cam0–2 images, lidar-clouds/, imu.csv
│   └── processed/                  # vilens-slam/, colmap/, trajectory/
├── ground_truth_map/<site-name>/   # TLS point clouds and survey report
├── calibration/                    # intrinsics, extrinsics, and raw calibration sequences
├── reconstruction_benchmark/
└── novel_view_synthesis_benchmark/

➡️ Read more: See the Dataset Structure wiki page.
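The layout above can be navigated programmatically. The following is a minimal sketch using only the directory names shown in the tree; the helper names, the root path `/data/oxford_spires_dataset` and the sequence name `example-sequence` are hypothetical placeholders, not actual names from the dataset.

```python
from pathlib import Path


def sequence_dirs(root, sequence_name):
    """Return the raw/ and processed/ directories for one sequence."""
    seq = Path(root) / "sequences" / sequence_name
    return {"raw": seq / "raw", "processed": seq / "processed"}


def ground_truth_map_dir(root, site_name):
    """Return the directory holding the TLS map for one site."""
    return Path(root) / "ground_truth_map" / site_name


def missing_dirs(paths):
    """List the keys whose directories do not exist on disk."""
    return [name for name, path in paths.items() if not path.is_dir()]


# Hypothetical root and sequence name, for illustration only.
dirs = sequence_dirs("/data/oxford_spires_dataset", "example-sequence")
not_found = missing_dirs(dirs)  # non-empty if the download is incomplete
```

Checking for missing directories before running a benchmark gives an early, readable failure when only part of the dataset has been downloaded.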

GoogleDrive

Code

Our GitHub repository provides software to download the data and run the localisation, reconstruction and novel-view synthesis benchmarks.

Citation

@article{tao2025spires,
  title={The Oxford Spires Dataset: Benchmarking Large-Scale LiDAR-Visual Localisation, Reconstruction and Radiance Field Methods},
  author={Tao, Yifu and Mu{\~n}oz-Ba{\~n}{\'o}n, Miguel {\'A}ngel and Zhang, Lintong and Wang, Jiahao and Fu, Lanke Frank Tarimo and Fallon, Maurice},
  journal={International Journal of Robotics Research},
  year={2025},
}

Contact

We encourage you to raise any issues via GitHub Issues. You can also contact us via email.

License

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License and is intended for non-commercial academic use. If you are interested in using the dataset for commercial purposes, please contact us via email.